- Model description

- P-TON is a hybrid model for advanced garment transfer that preserves intricate designs, patterns, and motifs.

- Benchmarked capabilities

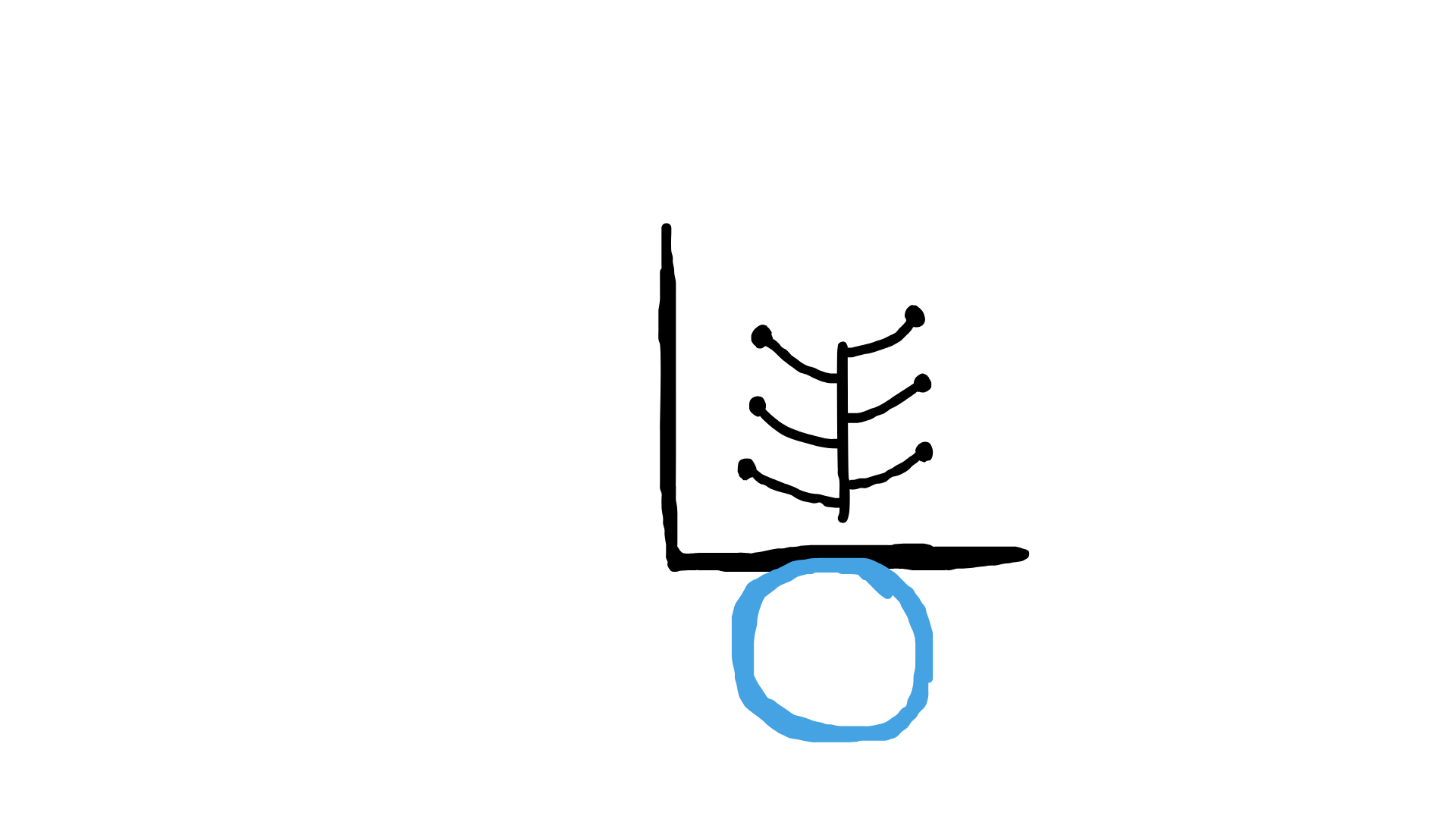

- P-TON is evaluated on internal fashion benchmarks covering garment-transfer fidelity, silhouette consistency across poses, pattern and motif preservation, drape realism, and color accuracy in reference-based generation.

- Acceptable uses

- See our Usage Policy.

- Release date

- November 2025

- Access surfaces

- PatternAI Web App

- PatternAI API

- AWS deployment

- Google Cloud deployment

- Azure deployment

- Software integration guidance

- See our developer documentation.

- Modalities

- P-TON accepts image, reference, and text inputs and produces high-resolution image outputs. It supports garment transfer across a wide range of silhouettes with consistent pattern, motif, and drape preservation.

- Knowledge cutoff date

- May 2025. The model is highly reliable for information and events up to this date.

- Software and hardware used in development

- Cloud resources from AWS and GCP with frameworks such as PyTorch.

- Model architecture and training methodology

- Pretrained on proprietary mixed datasets and post-trained using safety-alignment methods including human and AI feedback loops.

- Training data

- A proprietary mix of publicly available web data (up to the knowledge cutoff), licensed third-party data, contractor-labeled data, opted-in user data, and internal synthetic data.

- Testing methods and results

- Based on our assessments, we deployed P-TON with equivalent safeguards and additional post-deployment monitoring.